A useful skill to have as an engineer is recognizing when communication between devices has broken down. Sometimes voice servers in particular need a kindly admin to step in and smooth over their cluster relationships; unfortunately, however, you’ll find they are just too ashamed to ask for help.

So here is a list of signs to help you tell when your UCCX servers have gone from perfectly compatible to particularly petty, but haven’t bothered to tell you about the upset.

Supervisors cannot listen to recordings.

In Cisco Agent Desktop, an agent no longer sees his/her call stats in the call log.

Historical Reports gives an error message that no data exists for valid date ranges.

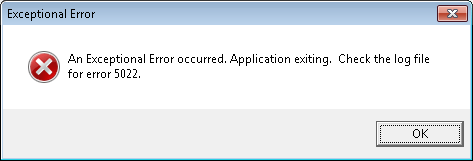

Historical Reports tells you how exceptional you are with an Exceptional Error.*

If you see one or more of these symptoms, it’s likely one of your uppity UCCX servers has told the other he was taking his toys and going home. You can confirm this in one of several ways:

On versions below 8.x, check out the Data Control Center. Both the Historical and Agent datastores will likely display this gem of an error message: “Error occurred while performing the operation. The cluster information and subscriber configuration does not match. The subscriber might be dropped (Please check SQL server log for more details).”

On versions 8.x and above, you have a couple of options:

Go to UCCX Serviceability -> Tools -> Control Center – Network Services and see if the Cisco Unified CCX Database is showing as Out Of Service for either node.

OR

Navigate to Tools -> Database Control Center -> Replication Servers and you will likely be greeted with this happy little declaration (in case you can’t read the message below, it starts with the phrase Publisher is DOWN, happy indeed):
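If you have already copied the text of an error page or log excerpt into a file, the two tell-tale phrases quoted above can be checked for automatically. What follows is only a minimal sketch: the function name `find_replication_signatures` and the labels in the dictionary are my own inventions for illustration, not anything Cisco ships, and the matching is a plain case-insensitive substring search.

```python
# Hypothetical helper, not a Cisco API: scans pasted error text for the
# replication-failure phrases quoted in this post.
REPLICATION_SIGNATURES = {
    # Label (made up for this sketch) -> phrase quoted in the post
    "pre-8.x Data Control Center": (
        "The cluster information and subscriber configuration does not match"
    ),
    "8.x+ Replication Servers page": "Publisher is DOWN",
}


def find_replication_signatures(text):
    """Return the labels of every known phrase that appears in text."""
    lowered = text.lower()
    return [
        label
        for label, phrase in REPLICATION_SIGNATURES.items()
        if phrase.lower() in lowered
    ]


if __name__ == "__main__":
    # Sample text is the pre-8.x error message quoted earlier in the post.
    sample = (
        "Error occurred while performing the operation. The cluster "
        "information and subscriber configuration does not match. The "
        "subscriber might be dropped (Please check SQL server log for "
        "more details)."
    )
    for label in find_replication_signatures(sample):
        print("Replication trouble signature found:", label)
```

An empty list only means neither phrase showed up in the text you pasted; it is not proof that the cluster is healthy.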

So what do you do if your UCCX servers are indeed giving each other the silent treatment? Well, unfortunately, I’ve found that nothing short of rebooting cures this particular ailment. Sure, you can click that “Reset Replication” button (after hours, of course), but it’s about as effective as hitting the elevator button over and over hoping that’ll make it come faster. Really, people, it doesn’t help! So just go ahead and plan that maintenance window to reboot the primary, followed by a reboot of the secondary.

But wait, there’s more!

If you noticed this issue because you are exceptional and your Historical Reports makes wild, unsubstantiated claims that no data exists, check out the solution under No Data Available in the Historical Reports at this link, because there are a few more hoops to jump through:

http://www.cisco.com/en/US/products/sw/custcosw/ps1844/products_tech_note09186a0080b42524.shtml

Yep, you get to uncheck boxes and recheck boxes, and THEN reboot! The fun just keeps on coming!

So once your surly servers get an attitude adjustment in the form of a reboot, you’ll find that they have an amazing ability to forgive each other and everyone can now rejoice in cluster harmony.

*On one of my encounters with this issue I was lucky enough to generate not just an error, but an Exceptional Error. Still makes me laugh. Yep, still easily amused.

Published: 01/30/2012